Recent months could be remembered as the moment when predictive artificial intelligence (AI) went mainstream. While prediction algorithms have been in use for decades, the release of applications such as OpenAI’s ChatGPT, and its rapid integration with Microsoft’s Bing search engine, might have opened the floodgates when it comes to user-friendly AI.

Within weeks of its release, ChatGPT had attracted 100 million monthly users, many of whom have doubtless already experienced its dark side, from insults and threats to disinformation and a demonstrated ability to write malicious code.

The chatbots that are generating headlines are just the tip of the iceberg. AI tools for creating text, speech, art and video are progressing rapidly, with far-reaching implications for governance, commerce and civic life. Not surprisingly, capital is flooding into the sector, with governments and companies investing in start-ups to develop and deploy the latest machine-learning tools.

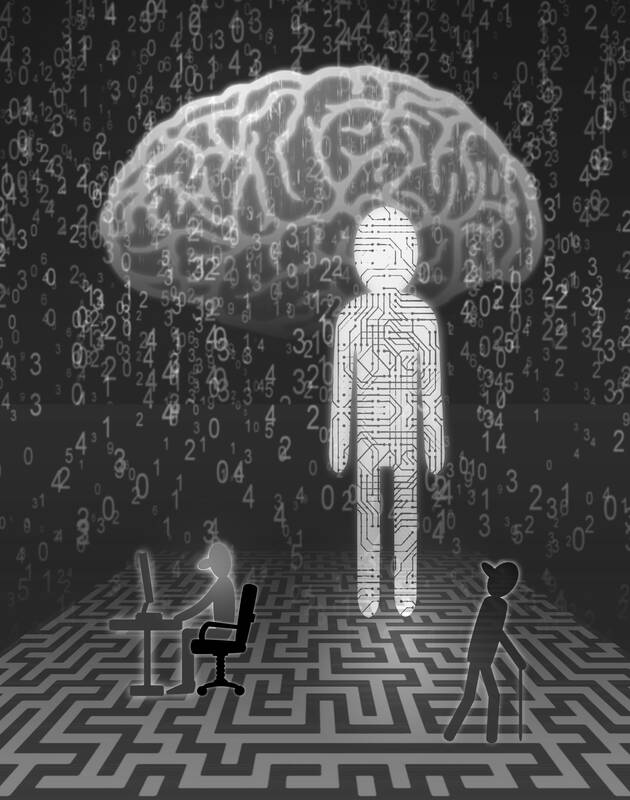

Illustration: Yusha

These new applications combine historical data with machine learning, natural language processing and deep learning to determine the probability of future events.

Crucially, adoption of the new natural language processing and generative AI tools will not be confined to the wealthy countries and companies, such as Google, Meta and Microsoft, that spearheaded their creation.

These technologies are already spreading across low- and middle-income settings, where predictive analytics for everything from reducing urban inequality to addressing food security hold tremendous promise for cash-strapped governments, firms and non-governmental organizations seeking to improve efficiency and unlock social and economic benefits.

However, insufficient attention has been given to the potential negative externalities and unintended effects of these technologies. The most obvious risk is that unprecedentedly powerful predictive tools could strengthen authoritarian regimes’ surveillance capacity.

One widely cited example is China’s “social-credit system,” which uses credit histories, criminal convictions, online behavior and other data to assign a score to every person in the country.

Those scores can be used to determine whether someone can secure a loan, access a good school, travel by rail or air, and so forth. Although China’s system is billed as a tool to improve transparency, it doubles as an instrument of social control.

Even when used by ostensibly well-intentioned democratic governments, companies focused on social impact and progressive nonprofits, predictive tools can generate sub-optimal outcomes.

Design flaws in the underlying algorithms and biased data sets can lead to privacy breaches and identity-based discrimination.

This has already become a glaring issue in criminal justice, where predictive analytics routinely perpetuate racial and socio-economic disparities. For example, an AI system built to help US judges assess the likelihood of recidivism erroneously determined that black defendants are at far greater risk of re-offending than white ones.

Concerns about how AI could deepen inequalities in the workplace are also growing. Predictive algorithms have been increasing efficiency and profits in ways that benefit managers and shareholders at the expense of rank-and-file workers — especially in the gig economy.

In all these examples, AI systems are holding up a funhouse mirror to society, reflecting and magnifying our biases and inequities.

Digitization tends to exacerbate, rather than ameliorate, existing political, social and economic problems, technology researcher Nanjira Sambuli said.

The enthusiasm to adopt predictive tools must be balanced against informed and ethical consideration of their intended and unintended effects. Where the effects of powerful algorithms are disputed or unknown, the precautionary principle would counsel against deploying them.

AI must not become another domain where decision-makers ask for forgiveness rather than permission. That is why the UN High Commissioner for Human Rights and others have called for moratoriums on the adoption of AI systems until ethical and human-rights frameworks have been updated to account for their potential harms.

Crafting the appropriate frameworks will require a consensus on the basic principles that should inform the design and use of predictive AI tools.

Fortunately, the race for AI has led to a parallel flurry of research, initiatives, institutes and networks on ethics. While civil society has taken the lead, intergovernmental entities such as the Organisation for Economic Co-operation and Development and UNESCO have also become involved.

The UN has been working on building universal standards for ethical AI since at least 2021. The EU has proposed an AI act — the first such effort by a major regulator — which would block certain uses, such as those resembling China’s social-credit system, and subject other high-risk applications to specific requirements and oversight.

This debate has been concentrated overwhelmingly in North America and Western Europe.

However, low- and middle-income countries have their own baseline needs, concerns and social inequities to consider. There is ample research showing that technologies developed by and for markets in advanced economies are often inappropriate for less-developed economies.

If AI tools are simply imported and put into wide use before the necessary governance structures are in place, they could easily do more harm than good. All these issues must be considered to devise truly universal principles for AI governance.

Recognizing these gaps, the think tanks Igarape Institute and New America recently launched a new Global Task Force on Predictive Analytics for Security and Development. The task force will convene digital-rights advocates, public-sector partners, tech entrepreneurs and social scientists from the Americas, Africa, Asia and Europe, with the goal of defining principles for the use of predictive technologies in public safety and sustainable development in the Global South.

Formulating these principles and standards is just the first step. The bigger challenge will be to marshal the international, national and subnational collaboration and coordination needed to implement them in law and practice.

In the global rush to develop and deploy new predictive AI tools, harm-prevention frameworks are essential to ensure a secure, prosperous, sustainable and human-centered future.

Robert Muggah, cofounder of the Igarape Institute and the SecDev Group, is a member of the World Economic Forum’s Global Future Council on Cities of Tomorrow and an adviser to the Global Risks Report. Gabriella Seiler is a consultant at the Igarape Institute and a partner and director at Kunumi. Gordon LaForge is a senior policy analyst at New America and a lecturer at the Thunderbird School of Global Management at Arizona State University.

Copyright: Project Syndicate
