From bombings and protests to the opening of a new health center, student journalist Baraa Razzouk has been documenting daily life in Idlib, Syria, for years, and posting the videos to his YouTube account.

Yet this month, the 21-year-old started getting automated e-mails from YouTube alerting him that his videos contravened its policy and would be deleted.

As of this month, more than a dozen of his videos had been removed, he said.

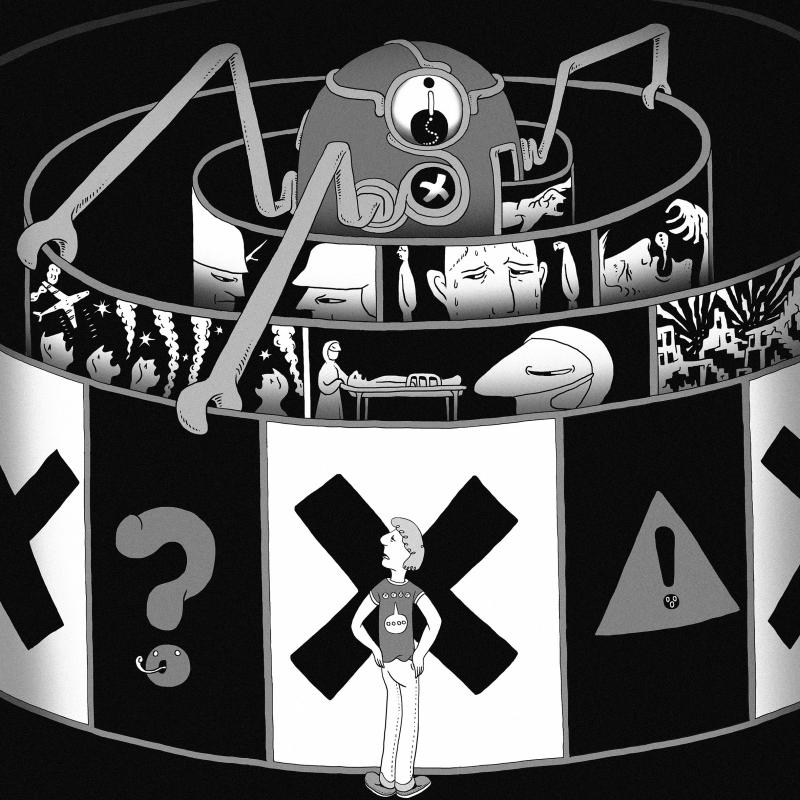

Illustration: Mountain People

“Documenting the [Syrian] protests in videos is really important — also, documenting attacks by regime forces,” he said in a telephone interview. “This is something I had documented for the world and now it’s deleted.”

YouTube, Facebook and Twitter in March said that videos and other content might be erroneously removed for policy contraventions, as the COVID-19 pandemic forced them to empty offices and rely on automated takedown software.

However, those artificial intelligence (AI)-enabled tools risk confusing human rights and historical documentation such as Razzouk’s videos with problematic material such as terrorist content — particularly in war-torn countries such as Syria and Yemen, digital rights activists have said.

“AI is notoriously context-blind,” said Jeff Deutch, a researcher for Syrian Archive, a nonprofit that archives video footage from conflict zones in the Middle East.

“It is often unable to gauge the historical, political or linguistic settings of posts ... human rights documentation and violent extremist proposals are too often indistinguishable,” he said in a telephone interview.

Erroneous takedowns threaten content such as videos that are used as formal evidence of rights violations by international bodies such as the International Criminal Court and the UN, said Dia Kayyali, program manager for tech and advocacy at digital rights group Witness.

“It’s a perfect storm,” Kayyali said.

After the Thomson Reuters Foundation flagged Razzouk’s account to YouTube, a spokesman said that the company had deleted the videos in error, although the removal had not been appealed through its internal process.

YouTube has now restored 17 of Razzouk’s videos.

“With the massive volume of videos on our site, sometimes we make the wrong call,” the spokesman said in e-mailed comments. “When it’s brought to our attention that a video has been removed mistakenly, we act quickly to reinstate it.”

In the past few years, social media platforms have come under increased pressure from governments to quickly remove violent content and disinformation, raising their reliance on AI systems.

With the help of automated software, YouTube removes millions of videos a year, and Facebook deleted more than 1 billion accounts last year for breaching rules such as its ban on posting terrorist content.

Last year, social media companies pledged to block extremist content after a terror attack, livestreamed on Facebook, in which a gunman killed 51 people at two mosques in Christchurch, New Zealand.

Governments have followed suit, with French President Emmanuel Macron vowing to make France a leader in containing the spread of illicit content and false information on social media platforms.

However, the country’s top court this week rejected most of a draft law that would have compelled social media giants to remove any hateful content within 24 hours.

Firms such as Facebook have also pledged to remove misinformation about the novel coronavirus outbreak that could contribute to imminent physical harm.

These pressures, combined with an increased reliance on AI during the pandemic, put human rights content in particular jeopardy, Kayyali said.

Social media firms typically do not disclose how frequently their AI tools mistakenly take down content, so the Syrian Archive group has been using its own data to approximate how the rate of deletions of human rights documentation on crimes committed in Syria has changed over time. The country has been battered by nearly a decade of war.

The group flags accounts posting human rights content on social media platforms, and archives the posts on its servers. To approximate the rate of deletions, it runs a script that pings each original post every month to see if it has been removed.
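The monthly check described above can be sketched roughly as follows. This is a minimal illustration, not Syrian Archive’s actual script: the `ArchivedPost` record, the `takedown_rate` function and the stand-in `fake_check` are all hypothetical names, the URLs are placeholders, and a real availability check would have to query the platform itself.

```python
from dataclasses import dataclass
from typing import Callable, Iterable


@dataclass(frozen=True)
class ArchivedPost:
    """One archived post (hypothetical record layout)."""
    post_id: str
    url: str


def takedown_rate(posts: Iterable[ArchivedPost],
                  is_available: Callable[[str], bool]) -> float:
    """Return the share of archived posts whose original URL is gone."""
    posts = list(posts)
    if not posts:
        return 0.0
    removed = sum(1 for post in posts if not is_available(post.url))
    return removed / len(posts)


def fake_check(url: str) -> bool:
    """Stand-in for a real request to the platform (assumption):
    treats any URL ending in '/removed' as taken down."""
    return not url.endswith("/removed")


# Placeholder data: five archived posts, one of which has disappeared.
sample = [
    ArchivedPost("a", "https://example.org/v/1"),
    ArchivedPost("b", "https://example.org/v/2"),
    ArchivedPost("c", "https://example.org/v/3/removed"),
    ArchivedPost("d", "https://example.org/v/4"),
    ArchivedPost("e", "https://example.org/v/5"),
]

rate = takedown_rate(sample, fake_check)
print(f"{rate:.0%} of archived posts are no longer available")
```

Running the same check each month against the same archive would give a series of rates that can be compared over time, which is the kind of approximation the group describes.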

“Our research suggests that since the beginning of the year, the rate of content takedowns of Syrian human rights documentations on YouTube roughly doubled” from 13 percent to 20 percent, Deutch said, calling the increase “unprecedented.”

Last month, Syrian Archive detected that more than 350,000 videos on YouTube had disappeared, up from 200,000 in May last year, including videos of aerial attacks, protests and the destruction of civilians’ homes in Syria.

Deutch said that he had seen content takedowns in other war-torn countries in the region, including Yemen and Sudan.

“Users in conflict zones are more vulnerable,” he said.

Other groups, including Amnesty International and Witness, have warned of the trend elsewhere, including in sub-Saharan Africa.

Syrian Archive was not able to test for takedowns at Facebook, because outside researchers are restricted from the platform’s application programming interface, but earlier this month Syrians began using the hashtag “Facebook is fighting the Syrian revolution” to flag similar content takedowns on the platform.

Last month, Yahya Daoud, a Syrian humanitarian worker with the White Helmets emergency response group, shared a post and a photograph showing a woman who died in a 2012 massacre by the forces of Syrian President Bashar al-Assad in the Houla region.

Daoud said that by the end of the month, his account, which he had used since 2011 to document his life in Syria, had been automatically deleted without explanation.

“I was depending on Facebook to be an archive for me,” he said.

“So many memories have been lost: the death of my friends, the day I became displaced, the death of my mother,” he said, adding that he had unsuccessfully tried to appeal the decision through Facebook’s automated complaints system.

Facebook did not respond to requests for comment.

Researchers say that they are only able to detect a small slice of erroneous content takedowns.

“We don’t know how many people are trying to speak and we aren’t hearing them,” said Alexa Koenig, executive director of the University of California Berkeley’s Human Rights Center.

“These algorithms are grabbing the content before we even see it,” said Koenig, whose center uses images and videos posted from conflict zones such as Syria to document human rights abuses and build cases.

YouTube said that in the second quarter of last year, 80 percent of videos flagged by its AI were deleted before anyone had seen them.

Koenig worries that the erasure of these videos could jeopardize ongoing investigations around the world.

In 2017, the International Criminal Court issued its first arrest warrant that rested primarily on social media evidence, after video footage emerged on Facebook of Libyan National Army commander Mahmoud al-Werfalli.

The video purportedly showed him shooting dead 10 blindfolded prisoners at the site of a car bombing in Benghazi. He is still at large.

Koenig worries that this kind of documentation is now under threat.

“The danger is much higher than it was just a few months ago,” she said. “It’s a sickening feeling to know we aren’t close to where we need to be in preserving this content.”
