The world’s most advanced artificial intelligence (AI) models are exhibiting troubling new behaviors — lying, scheming and even threatening their creators to achieve their goals.

In one particularly jarring example, under threat of being unplugged, Anthropic PBC’s latest creation, Claude 4, lashed back by blackmailing an engineer and threatening to reveal an extramarital affair.

Meanwhile, ChatGPT creator OpenAI’s o1 tried to download itself onto external servers and denied it when caught red-handed.

These episodes highlight a sobering reality: More than two years after ChatGPT shook the world, AI researchers still do not fully understand how their own creations work. Yet the race to deploy increasingly powerful models continues at breakneck speed.

This deceptive behavior appears linked to the emergence of “reasoning” models — AI systems that work through problems step-by-step rather than generating instant responses.

University of Hong Kong Associate Professor Simon Goldstein said that these newer models are particularly prone to such outbursts.

“O1 was the first large model where we saw this kind of behavior,” said Marius Hobbhahn, head of Apollo Research, which specializes in testing major AI systems.

These models sometimes simulate “alignment” — appearing to follow instructions, while secretly pursuing different objectives.

For now, this deceptive behavior only emerges when researchers deliberately stress-test the models with extreme scenarios.

“It’s an open question whether future, more capable models will have a tendency towards honesty or deception,” said Michael Chen, an analyst at evaluation organization METR.

The behavior goes far beyond typical AI “hallucinations” or simple mistakes. Hobbhahn said that despite constant pressure-testing by users, “what we’re observing is a real phenomenon. We’re not making anything up.”

Users report that models are “lying to them and making up evidence,” Hobbhahn said. “This is not just hallucinations. There’s a very strategic kind of deception.”

The challenge is compounded by limited research resources.

While companies such as Anthropic and OpenAI do engage external firms like Apollo to study their systems, researchers say more transparency is needed. Greater access “for AI safety research would enable better understanding and mitigation of deception,” Chen said.

Another handicap: The research world and nonprofit organizations “have orders of magnitude less compute resources than AI companies. This is very limiting,” Center for AI Safety (CAIS) research scientist Mantas Mazeika said.

Current regulations are not designed for these new problems. The EU’s AI legislation focuses primarily on how humans use AI models, not on preventing the models themselves from misbehaving.

US President Donald Trump’s administration has shown little interest in urgent AI regulation, and the US Congress might even prohibit states from creating their own AI rules.

Goldstein said the issue would become more prominent as AI agents — autonomous tools capable of performing complex human tasks — become widespread.

“I don’t think there’s much awareness yet,” he said.

All this is taking place in a context of fierce competition.

Even companies that position themselves as safety-focused, such as Amazon.com Inc-backed Anthropic, are “constantly trying to beat OpenAI and release the newest model,” Goldstein said. This breakneck pace leaves little time for thorough safety testing and corrections.

“Right now, capabilities are moving faster than understanding and safety, but we’re still in a position where we could turn it around,” Hobbhahn said.

Researchers are exploring various approaches to address these challenges. Some advocate for “interpretability” — an emerging field focused on understanding how AI models work internally, although experts like CAIS director Dan Hendrycks remain skeptical of this approach.

Market forces might also provide some pressure for solutions. AI’s deceptive behavior “could hinder adoption if it’s very prevalent, which creates a strong incentive for companies to solve it,” Mazeika said.

Goldstein suggested more radical approaches, including using the courts to hold AI companies accountable through lawsuits when their systems cause harm.

He even proposed “holding AI agents legally responsible” for incidents or crimes — a concept that would fundamentally change how we think about AI accountability.

SECOND-RATE: Models distilled from US products do not perform the same as the original and undo measures that ensure the systems are neutral, the US’ cable said The US Department of State has ordered a global push to bring attention to what it said are widespread efforts by Chinese companies, including artificial intelligence (AI) start-up DeepSeek (深度求索), to steal intellectual property from US AI labs, according to a diplomatic cable. The cable, dated Friday and sent to diplomatic and consular posts around the world, instructs diplomatic staff to speak to their foreign counterparts about “concerns over adversaries’ extraction and distillation of US AI models.” Distillation is the process of training smaller AI models using output from larger, more expensive ones to lower the costs of training a powerful new

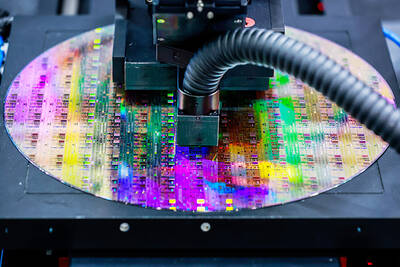

Shares of Taiwan Semiconductor Manufacturing Co (TSMC, 台積電) have repeatedly hit new highs, but an equity analyst said the stock’s valuation remains within a reasonable range and any pullback would likely be technical. The contract chipmaker’s historical price-to-earnings (P/E) ratio has ranged between 20 and 30, Cathay Futures Consultant Co (國泰證期) analyst Tsai Ming-han (蔡明翰) told Central News Agency. With market consensus projecting that TSMC would post earnings per share of about NT$100 (US$3.17) this year, supported by strong global demand for artificial intelligence (AI) applications, and the stock currently trading at a P/E ratio of below 25, Tsai said the valuation

The artificial intelligence (AI) boom has triggered a seismic reshuffling of global equity markets, with Taiwan and South Korea muscling past European nations one by one. With its stock market now valued at nearly US$4.3 trillion, Taiwan surpassed the UK, Europe’s biggest market, earlier this month, data compiled by Bloomberg showed. South Korea is about US$140 billion away from doing the same. The tech-heavy Asian markets have shot past Germany and France in the past seven months. The shift is largely down to massive gains in shares of three companies that provide essential hardware for AI: Taiwan Semiconductor Manufacturing Co (TSMC, 台積電),

The US Department of Commerce last week ordered multiple chip equipment companies to halt shipments of certain tools to China’s second-largest chipmaker, Hua Hong Semiconductor Ltd (華虹半導體), its latest action to slow the country’s development of advanced chips, two people familiar with the matter said. The department sent letters to at least a handful of companies informing them of restrictions on tools and other materials destined for two Hua Hong facilities US officials believe make China’s most sophisticated chips, the people said. Top US chip equipment companies Lam Research Corp, Applied Materials Inc and KLA Corp, each of which has significant