No one sells the future more masterfully than the tech industry.

Its proponents say that we will all live in the “metaverse,” build our financial infrastructure on “Web3” and power our lives with “artificial intelligence” (AI). All three of these terms are mirages that have raked in billions of dollars, despite reality biting back.

“AI” in particular conjures the notion of thinking machines, but no machine can think, and no software is truly intelligent. The phrase alone might be one of the most successful marketing terms of all time.

Last week, OpenAI announced GPT-4, a major upgrade to the technology underpinning ChatGPT. The system sounds even more humanlike than its predecessor, naturally reinforcing notions of its intelligence.

However, GPT-4 and other large language models like it are simply mirroring databases of text (close to 1 trillion words for the previous model) whose scale is difficult to contemplate. Helped along by an army of humans reprogramming them with corrections, the models glom words together based on probability. That is not intelligence.

These systems are trained to generate text that sounds plausible, yet they are marketed as new oracles of knowledge that can be plugged into search engines. That is foolhardy when GPT-4 continues to make errors, and it was only a few weeks ago that Microsoft and Google suffered embarrassing demos in which their new search engines glitched on facts.

Not helping matters are terms such as “neural networks” and “deep learning,” which only bolster the idea that these programs are humanlike.

Neural networks are not copies of the human brain in any way; they are only loosely inspired by its workings. Long-term efforts to replicate the human brain, with its roughly 85 billion neurons, have all failed. The closest scientists have come is emulating the brain of a worm, with 302 neurons.

We need a different lexicon that does not propagate magical thinking about computer systems, and does not absolve the people designing those systems from their responsibilities.

What is a better alternative? Reasonable technologists have tried for years to replace “AI” with “machine learning systems,” but that does not trip off the tongue in quite the same way.

Stefano Quintarelli, a former Italian lawmaker and technologist, came up with another alternative, “Systemic Approaches to Learning Algorithms and Machine Inferences” (SALAMI), to underscore the ridiculousness of the questions people have been posing about “AI”: Is “SALAMI” sentient? Will “SALAMI” ever have supremacy over humans?

The most hopeless attempt at a semantic alternative is probably the most accurate: “software.”

“But what is wrong with using a little metaphorical shorthand to describe technology that seems so magical?” I hear you ask.

The answer is that ascribing intelligence to machines gives them undeserved independence from humans, and it absolves their creators of responsibility for their impact.

If we see ChatGPT as “intelligent,” then we are less inclined to try to hold San Francisco-based start-up OpenAI, its creator, to account for its inaccuracies and biases. It also creates a fatalistic compliance among people who suffer technology’s damaging effects. Yet “AI” will not take your job or plagiarize your artistic creations; other people will.

The issue is ever more pressing now that companies from Facebook parent Meta Platforms to Snapchat and Morgan Stanley are rushing to plug chatbots, as well as text and image generators, into their systems.

Spurred by its new arms race with Google, Microsoft is putting OpenAI’s language model technology, still largely untested, into its most popular business apps, including Word, Outlook and Excel.

“Copilot will fundamentally change how people work with AI and how AI works with people,” Microsoft said of its new feature.

However, for customers, the promise of working with intelligent machines is almost misleading.

AI is “one of those labels that expresses a kind of utopian hope rather than present reality, somewhat as the rise of the phrase ‘smart weapons’ during the first Gulf War implied a bloodless vision of totally precise targeting that still isn’t possible,” said British journalist Steven Poole, author of the book ***Unspeak***, about the dangerous power of words and labels.

Margaret Mitchell, a computer scientist who was fired by Google after publishing a paper that criticized the biases in large language models, has reluctantly described her work as being based in “AI” over the past few years.

“Before ... people like me said we worked on ‘machine learning.’ That’s a great way to get people’s eyes to glaze over,” she told a conference panel last week.

Her former Google colleague and founder of the Distributed Artificial Intelligence Research Institute, Timnit Gebru, said she also only started saying “AI” in about 2013, when “it became the thing to say.”

“It’s terrible, but I’m doing this too,” Mitchell said. “I’m calling everything that I touch ‘AI’ because then people will listen to what I’m saying.”

Unfortunately, “AI” is so embedded in our vocabulary that it will be almost impossible to shake, the obligatory air quotes difficult to remember.

At the very least, we should remind ourselves of how reliant such systems are on human managers who should be held accountable for their side effects.

Poole said he prefers to call chatbots such as ChatGPT and image generators such as Midjourney “giant plagiarism machines,” as they mainly recombine prose and pictures that were originally created by humans.

“I’m not confident it will catch on,” he said.

In more ways than one, we really are stuck with “AI.”

Parmy Olson is a Bloomberg Opinion columnist covering technology. She is a former reporter for the ***Wall Street Journal*** and ***Forbes***.

This column does not necessarily reflect the opinion of the editorial board or Bloomberg LP and its owners.

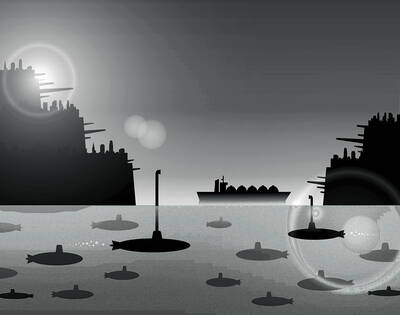

In the event of a war with China, Taiwan has some surprisingly tough defenses that could make it as difficult to tackle as a porcupine: A shoreline dotted with swamps, rocks and concrete barriers; conscription for all adult men; highways and airports that are built to double as hardened combat facilities. This porcupine has a soft underbelly, though, and the war in Iran is exposing it: energy. About 39,000 ships dock at Taiwan’s ports each year, more than the 30,000 that transit the Strait of Hormuz. About one-fifth of their inbound tonnage is coal, oil, refined fuels and liquefied natural gas (LNG),

On Monday, the day before Chinese Nationalist Party (KMT) Chairwoman Cheng Li-wun (鄭麗文) departed on her visit to China, the party released a promotional video titled “Only with peace can we ‘lie flat’” to highlight its desire to have peace across the Taiwan Strait. However, its use of the expression “lie flat” (tang ping, 躺平) drew sarcastic comments, with critics saying it sounded as if the party was “bowing down” to the Chinese Communist Party (CCP). Amid the controversy over the opposition parties blocking proposed defense budgets, Cheng departed for China after receiving an invitation from the CCP, with a meeting with

Chinese Nationalist Party (KMT) Chairwoman Cheng Li-wun (鄭麗文) is leading a delegation to China through Sunday. She is expected to meet with Chinese President Xi Jinping (習近平) in Beijing tomorrow. That date coincides with the anniversary of the signing of the Taiwan Relations Act (TRA), which marked a cornerstone of Taiwan-US relations. Staging their meeting on this date makes it clear that the Chinese Communist Party (CCP) intends to challenge the US and demonstrate its “authority” over Taiwan. Since the US severed official diplomatic relations with Taiwan in 1979, it has relied on the TRA as a legal basis for all

Taiwan ranks second globally in terms of share of population with a higher-education degree, with about 60 percent of Taiwanese holding a post-secondary or graduate degree, a survey by the Organisation for Economic Co-operation and Development showed. The findings are consistent with Ministry of the Interior data, which showed that as of the end of last year, 10.602 million Taiwanese had completed post-secondary education or higher. Among them, the number of women with graduate degrees was 786,000, an increase of 48.1 percent over the past decade and a faster rate of growth than among men. A highly educated population brings clear advantages.