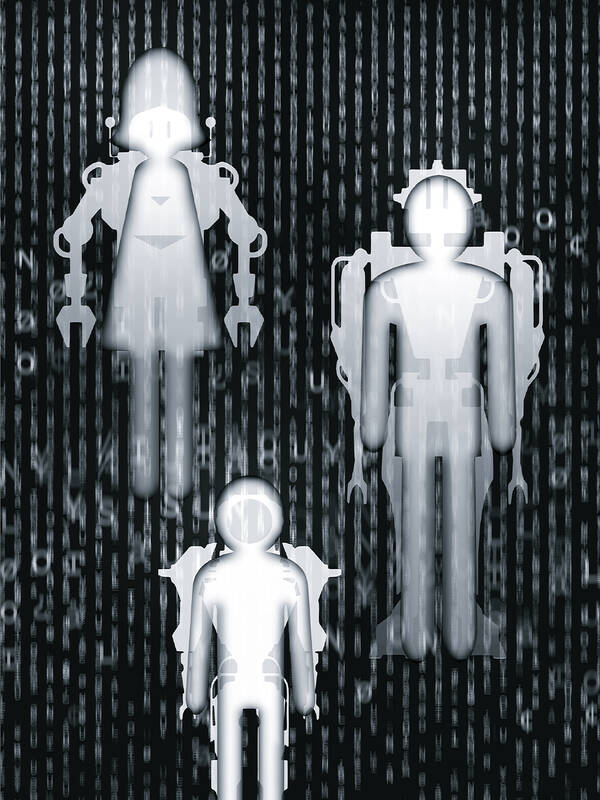

Artificial intelligence (AI) has been moving so fast that even scientists are finding it hard to keep up. In the past year, machine learning algorithms have started to generate rudimentary movies and stunning fake photographs.

They are even writing code. In the future, people are likely to look back on this year as the year AI shifted from processing information to creating content as well as many humans.

Yet what if people also look back on it as the year AI took a step toward the destruction of the human species?

Illustration: Yusha

As hyperbolic and ridiculous as that sounds, public figures from Microsoft cofounder Bill Gates, SpaceX and Tesla CEO Elon Musk, and late physicist Stephen Hawking to British computer pioneer Alan Turing have expressed concerns about the fate of humans in a world where machines surpass them in intelligence, with Musk saying that AI is becoming more dangerous than nuclear warheads.

After all, humans do not treat less-intelligent species particularly well, so who is to say that computers, trained ubiquitously on data that reflects all the facets of human behavior, would not “place their goals ahead of ours,” as legendary computer scientist Marvin Minsky once said?

Refreshingly, there is some good news. More scientists are seeking to make deep learning systems more transparent and measurable. That momentum must not stop. As these programs become ever more influential in financial markets, social media and supply chains, technology firms must start prioritizing AI safety over capability.

Across the world’s major AI labs last year, roughly 100 full-time researchers were focused on building safe systems, according to last year’s State of AI report, which is produced annually by London venture capital investors Ian Hogarth and Nathan Benaich.

Their report for this year found there are still only about 300 researchers working full-time on AI safety.

“It’s a very low number,” Hogarth said during a Twitter Spaces discussion this week on the threat of AI. “Not only are very few people working on making these systems aligned, but it’s also kind of a Wild West.”

Hogarth was referring to how in the past year a flurry of AI tools and research has been produced by open-source groups, who say super-intelligent machines should not be controlled and built in secret by a few large companies, but created out in the open.

For instance, the community-driven organization EleutherAI in August last year developed GPT-Neo, a public version of a powerful tool that could write realistic comments and essays on nearly any subject.

The original tool, called GPT-3, was developed by OpenAI, a company cofounded by Musk and largely funded by Microsoft that offers limited access to its powerful systems.

This year, several months after OpenAI impressed the AI community with a revolutionary image-generating system called DALL-E 2, an open-source firm called Stability AI released its own version of the tool, Stable Diffusion, to the public, free of charge.

One of the benefits of open-source software is that by being out in the open, a greater number of people are constantly probing it for inefficiencies. That is why Linux has historically been one of the most secure operating systems available to the public.

However, throwing powerful AI systems out into the open also raises the risk that they could be misused. If AI is as potentially damaging as a virus or nuclear contamination, then perhaps it makes sense to centralize its development. After all, viruses are scrutinized in bio-safety labs and uranium is enriched in carefully constrained environments.

Although research into viruses and nuclear power is overseen by regulation, governments are trailing the rapid pace of AI, and there are still no clear guidelines for its development.

“We’ve almost got the worst of both worlds,” Hogarth said.

AI risks misuse by being built out in the open, but no one is overseeing what is happening when it is created behind closed doors either.

For now at least, it is encouraging to see a brighter spotlight on AI alignment, a growing field concerned with designing AI systems that are “aligned” with human goals.

Leading AI companies such as Alphabet Inc’s DeepMind and OpenAI have multiple teams working on AI alignment, and many researchers from those firms have gone on to launch their own start-ups, some of which are focused on making AI safe.

These include San Francisco-based Anthropic, whose founding team left OpenAI and raised US$580 million from investors earlier this year, and London-based Conjecture, which was recently backed by the founders of GitHub, Stripe and FTX Trading.

Conjecture is operating under the assumption that AI will reach parity with human intelligence in the next five years, and that its trajectory spells catastrophe for the human species.

Asked why AI might want to hurt humans, Conjecture CEO Connor Leahy said: “Imagine humans want to flood a valley to build a hydroelectric dam, and there is an anthill in the valley. This won’t stop the humans from their construction, and the anthill will promptly get flooded.”

“At no point did any humans even think about harming the ants. They just wanted more energy, and this was the most efficient way to achieve that goal,” he said. “Analogously, autonomous AIs will need more energy, faster communication and more intelligence to achieve their goals.”

To prevent that dark future, the world needs a “portfolio of bets,” including scrutinizing deep learning algorithms to better understand how they make decisions, and trying to endow AI with more human-like reasoning, Leahy said.

Even if Leahy’s fears seem overblown, it is clear that AI is not on a path that is entirely aligned with human interests. Just look at some of the recent efforts to build chatbots.

Microsoft abandoned its 2016 bot Tay, which learned from interacting with Twitter users, after it posted racist and sexually charged messages within hours of being released.

In August, Meta Platforms released a chatbot that said Donald Trump was still the US president, having been trained on public text on the Internet.

No one knows if AI will wreak havoc on financial markets or torpedo the food supply chain one day, but it could pit human beings against one another through social media, something that is arguably already happening.

The powerful AI systems recommending posts to people on Twitter and Facebook are aimed at juicing people’s engagement, which inevitably means serving up content that provokes outrage or spreads misinformation.

When it comes to “AI alignment,” changing those incentives would be a good place to start.

Parmy Olson is a Bloomberg Opinion columnist covering technology, and a former reporter for the Wall Street Journal and Forbes.

This column does not necessarily reflect the opinion of the editorial board or Bloomberg LP and its owners.
