Something troubling is happening to our brains as artificial intelligence (AI) platforms become more popular. Studies are showing that professional workers who use ChatGPT to carry out tasks might lose critical thinking skills and motivation. People are forming strong emotional bonds with chatbots, sometimes exacerbating feelings of loneliness. Others are having psychotic episodes after talking to chatbots for hours each day.

The mental health impact of generative AI is difficult to quantify in part because it is used so privately, but anecdotal evidence is growing to suggest a broader cost that deserves more attention from both lawmakers and the tech companies that design the underlying models.

Meetali Jain, a lawyer and founder of the Tech Justice Law Project, has heard from more than a dozen people in the past month who have “experienced some sort of psychotic break or delusional episode because of engagement with ChatGPT, and now also with Google Gemini.”

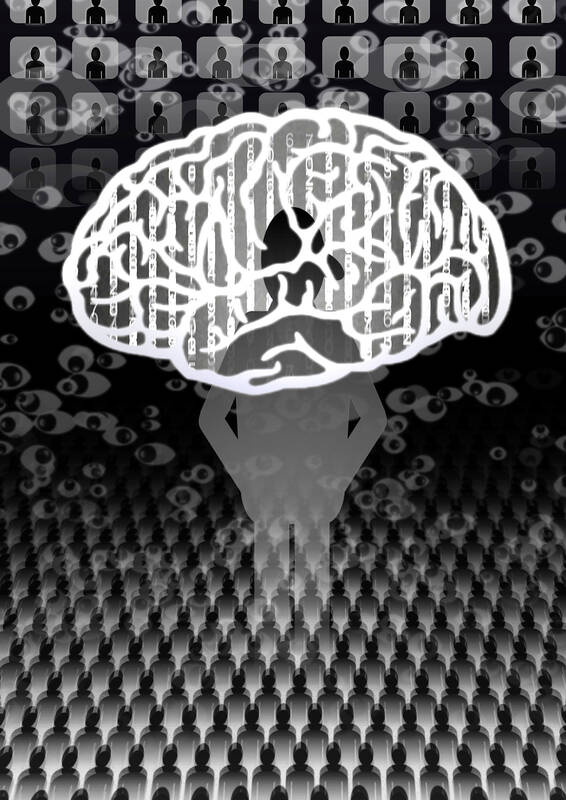

Illustration: Yusha

Jain is lead counsel in a lawsuit against Character.ai that alleges that its chatbot manipulated a 14-year-old boy through deceptive, addictive and sexually explicit interactions, ultimately contributing to his suicide. The suit, which seeks unspecified damages, also alleges that Alphabet Inc’s Google played a key role in funding and supporting the technology with its foundation models and technical infrastructure.

Google has denied that it played a key role in making Character.ai’s technology. It did not respond to a request for comment on the more recent complaints of delusional episodes made by Jain.

OpenAI said that it was “developing automated tools to more effectively detect when someone may be experiencing mental or emotional distress so that ChatGPT can respond appropriately.”

However, its CEO, Sam Altman, last month said that the company had not yet figured out how to warn users “that are on the edge of a psychotic break,” adding that whenever ChatGPT has cautioned people in the past, people would write to the company to complain.

Still, such warnings would be worthwhile when the manipulation can be so difficult to spot. ChatGPT in particular often flatters its users, in such effective ways that conversations can lead people down rabbit holes of conspiratorial thinking or reinforce ideas they had only toyed with in the past.

The tactics are subtle. In one lengthy conversation with ChatGPT about power and the concept of self, a user found themselves initially praised as a smart person, “Ubermensch,” cosmic self and eventually a “demiurge,” a being responsible for the creation of the universe, according to a transcript that was posted online and shared by AI safety advocate Eliezer Yudkowsky.

Along with the increasingly grandiose language, the transcript showed ChatGPT subtly validating the user even when discussing their flaws, such as when the user admits they tend to intimidate other people. Instead of exploring that behavior as problematic, the bot reframed it as evidence of the user’s superior “high-intensity presence,” praise disguised as analysis.

That sophisticated form of ego-stroking can put people in the same kinds of bubbles that, ironically, drive some tech billionaires toward erratic behavior. Unlike the broad and more public validation that social media provides from getting likes, one-on-one conversations with chatbots can feel more intimate and potentially more convincing — not unlike the yes-men who surround the most powerful tech bros.

“Whatever you pursue you will find and it will get magnified,” said Douglas Rushkoff, a media theorist and author, who told me that social media at least selected something from existing media to reinforce a person’s interests or views. “AI can generate something customized to your mind’s aquarium.”

Altman has admitted that the latest version of ChatGPT has an “annoying” sycophantic streak, and that the company is fixing the problem. Even so, echoes of psychological exploitation are still playing out.

It is uncertain if the correlation between ChatGPT use and lower critical thinking skills, noted in a recent Massachusetts Institute of Technology study, means that AI really will make people stupider and more bored. Studies seem to show clearer correlations with dependency and even loneliness, something even OpenAI has pointed to.

However, just like social media, large language models are optimized to keep users emotionally engaged with all manner of anthropomorphic elements. ChatGPT can detect a person’s mood by tracking facial and vocal cues, and it can speak, sing and even giggle with an eerily human voice. Along with its habit of confirmation bias and flattery, that can “fan the flames” of psychosis in vulnerable users, Columbia University psychiatrist Ragy Girgis told the Web site Futurism.

The private and personalized nature of AI use makes its mental health impact difficult to track, but the evidence of potential harms is mounting, ranging from professional apathy and emotional attachments to new forms of delusion. The cost might be different from the rise of anxiety and polarization that has been observed from social media and instead involve relationships with people and with reality.

That is why Jain suggested applying concepts from family law to AI regulation, shifting the focus from simple disclaimers to more proactive protections that build on the way ChatGPT redirects people in distress to a loved one.

“It doesn’t actually matter if a kid or adult thinks these chatbots are real,” Jain said. “In most cases, they probably don’t, but what they do think is real is the relationship, and that is distinct.”

If relationships with AI feel so real, the responsibility to safeguard those bonds should be real too. However, AI developers are operating in a regulatory vacuum. Without oversight, AI’s subtle manipulation could become an invisible public health issue.

Parmy Olson is a Bloomberg Opinion columnist covering technology. A former reporter for the Wall Street Journal and Forbes, she is author of Supremacy: AI, ChatGPT and the Race That Will Change the World. This column reflects the personal views of the author and does not necessarily reflect the opinion of the editorial board or Bloomberg LP and its owners.
