Newsflash: the Internet doesn’t exist. If you think there is just one thing called “the Internet” with a single logic and set of values — rather than a variety of different networked technologies, each with its own character and challenges — and that the rest of the world must be reshaped around it, then you are an “Internet-centrist.” If you think the messiness and inefficiency of political and cultural life are problems that should be fixed using technology, then you are a “solutionist.” And if you think that the age of Twitter and online videos of sneezing cats is so unlike anything that has gone before that we must tear up the rule book of civilization, then you are an “epochalist.” Such coinages are one of the drive-by amusements of reading Evgeny Morozov, who, since his first book, The Net Delusion, has become one of our most penetrating and brilliantly sardonic critics of techno-utopianism.

He certainly has some colorful adversaries. One is Jeff Jarvis, a new-media cyberhustler and consultant who is serially wrong about the near future, and seemingly cannot bear to hear any criticism of his adored Silicon Valley corporations. Appearing on the BBC earlier this year after Facebook had been hacked, he accused his interviewer of spreading “technopanic,” insisted the whole story was “crap,” and said: “This interview shouldn’t exist.” Afterwards, he tweeted: “The BBC can kiss my ass,” and “Fuck you, BBC.”

Among Morozov’s other targets are Amazon chief Jeff Bezos, with his “populist rage against institutions” (except his own); LinkedIn supremo Reid Hoffman, who has perpetrated a book-shaped product entitled The Start-Up of You; Google’s Eric Schmidt, who believes that an algorithm could one day tell you what is the “Best music from Lady Gaga”; Microsoft engineer Gordon Bell, lifelogger extraordinaire and exemplary lunatic of the mindset that holds that Truth, in the form of perfect data recall, is the absolute social value; and the games-will-save-the-world theorist Jane McGonigal, whose work Morozov likens to “a bad parody of Mitt Romney.”

But Morozov’s attacks go deeper than righteous ridicule — he also interrogates the intellectual foundations of the cybertheorists, and finds that, often, they have cherry-picked ideas from the scholarly literature that are at best highly controversial in their own fields. His readings in this vein of Clay Shirky, Steven Johnson, David Weinberger and numerous other cyberintellectuals are suavely devastating.

We must, Morozov argues forcefully, place today’s arguments in a broader context. “To talk about gamification” — the management-theory fad that seeks to apply videogame-style motivations and rewards to real-world practices — “without also discussing BF Skinner’s behaviorism,” he writes, is “misguided.” Here the Belarus-born author also justifiably plays an autobiographical trump card: “As someone who grew up in the final years of the Soviet Union, even I remember the penchant that Soviet managers had for gamification: students were shipped to the fields to harvest wheat or potatoes, and since the motivation was lacking, they too were assigned points and badges.”

The cyberhustlers are constantly declaring Year Zero and demanding that society be reformed according to the demands of “the Internet.” But their understanding of the institutions they dream of seeing torn down — politics, the media and now even university education — is superficial, as is their understanding of “the Internet” itself, whose secretive, privately owned corporations are nothing like as “open” as their cheerleaders insist everything else must henceforth be. (Another of today’s serious digital critics, Jaron Lanier, emphasizes this point in his latest book, Who Owns the Future?) The important and admirably fulfilled purpose of Morozov’s book, then, is to argue, as he finally sums up: “that there are good reasons not to run our politics as a startup … that there are good reasons to value subjective but high-quality criticism, even if it doesn’t stem from the ‘wisdom of crowds’ [… and] that numbers often tell us less than we think and quantification as such might actually thwart reforms.”

Quantification, in the form of “Big Data,” is the subject of Viktor Mayer-Schonberger and Kenneth Cukier’s initially more celebratory book, Big Data: A Revolution That Will Transform How We Live, Work and Think. Now that we can collect and analyze vast quantities of data — often, all or nearly all the relevant data rather than just statistical samples — wonderful things can happen. Google predicts the spread of flu in near-real-time by analyzing searches; engineers foresee the failure of engine parts that wirelessly phone home; and Walmart notices that, just before a hurricane hits, sales of Pop-Tarts increase. That there is a certain bathos to the progression of these examples is to be expected in an era that does not differentiate too pedantically between what is good for business and what is good for people.

Mayer-Schonberger and Cukier laudably demolish some of the more ludicrous big-data fantasies — for example, the claim by former Wired editor Chris Anderson that big data in science means “the end of theory” — but they also choose not to draw some arguably important distinctions. Is there, perhaps, a difference between “data” and “information” and “knowledge”? Might it be useful to distinguish between the articles a computer program has determined are “popular” on the Internet and those that are actually worth reading?

The dark side of big data, according to the authors, lies in surveillance — in communist East Germany, they point out, the Stasi were aspiring big-data fanatics — and in the alarming prospect of Minority Report-style pre-emptive policing. (According to a study too recent to make it into either book, Facebook “likes” can already be used to accurately predict “sexual orientation, ethnicity, religious and political views, personality traits, intelligence, happiness, use of addictive substances, parental separation, age and gender.”) We could sleepwalk into a “dictatorship of data,” where the algorithms mining the data for actionable recommendations are inscrutable and unaccountable “black boxes.” So, these authors conclude, we need a new cadre of “algorithmists,” people who scrutinize code for its obscured political choices.

Morozov, too, calls for “algorithmic auditors.” (Already, he points out, a single Californian company determines automatically what will count as hate speech and obscenity in the comment systems of thousands of Web sites.) More imaginatively, he also points out many possible consequences of the social engineers’ techno-fixes. “Would self-driving cars,” he wonders pointedly, “result in inferior public transportation as more people took up driving?” If you can measure and upload your health, diet and fitness data to be “shared” with insurance companies, then you’ll get cheaper insurance, say Mayer-Schonberger and Cukier cheerfully. But wait, says Morozov, such individual decisions don’t take place in a vacuum: “If I choose to track and publicize my health, and you choose not to, then sooner or later your decision to do nothing might be seen as tacit acknowledgment that you have something to hide.” These are, then, social and political problems, and ones that the mantra of individual choice cyborgized through shiny new technologies will often answer in ways that harm the already vulnerable.

In one amazing possible future, this newspaper’s Web site would know, thanks to your aggregated personal data, whether you are already a fan of Jeff Jarvis and Clay Shirky: if so, it would then serve to you an alternative version of this review that scolded Evgeny Morozov for his curmudgeonly hatred of inevitable progress. Happily, we are not there yet, and we can still argue along with him about what sort of future we want. Data-dissidents of the world, unite: you have nothing to lose but your targeted adverts.

In late October of 1873 the government of Japan decided against sending a military expedition to Korea to force that nation to open trade relations. Across the government supporters of the expedition resigned immediately. The spectacle of revolt by disaffected samurai began to loom over Japanese politics. In January of 1874 disaffected samurai attacked a senior minister in Tokyo. A month later, a group of pro-Korea expedition and anti-foreign elements from Saga prefecture in Kyushu revolted, driven in part by high food prices stemming from poor harvests. Their leader, according to Edward Drea’s classic Japan’s Imperial Army, was a samurai

The following three paragraphs are just some of what the local Chinese-language press is reporting on breathlessly and following every twist and turn with the eagerness of a soap opera fan. For many English-language readers, it probably comes across as incomprehensibly opaque, so bear with me briefly dear reader: To the surprise of many, former pop singer and Democratic Progressive Party (DPP) ex-lawmaker Yu Tien (余天) of the Taiwan Normal Country Promotion Association (TNCPA) at the last minute dropped out of the running for committee chair of the DPP’s New Taipei City chapter, paving the way for DPP legislator Su

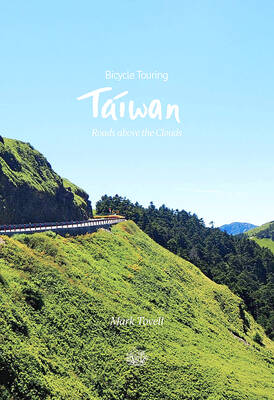

It’s hard to know where to begin with Mark Tovell’s Taiwan: Roads Above the Clouds. Having published a travelogue myself, as well as having contributed to several guidebooks, at first glance Tovell’s book appears to inhabit a middle ground — the kind of hard-to-sell nowheresville publishers detest. Leaf through the pages and you’ll find them suffuse with the purple prose best associated with travel literature: “When the sun is low on a warm, clear morning, and with the heat already rising, we stand at the riverside bike path leading south from Sanxia’s old cobble streets.” Hardly the stuff of your

Located down a sideroad in old Wanhua District (萬華區), Waley Art (水谷藝術) has an established reputation for curating some of the more provocative indie art exhibitions in Taipei. And this month is no exception. Beyond the innocuous facade of a shophouse, the full three stories of the gallery space (including the basement) have been taken over by photographs, installation videos and abstract images courtesy of two creatives who hail from the opposite ends of the earth, Taiwan’s Hsu Yi-ting (許懿婷) and Germany’s Benjamin Janzen. “In 2019, I had an art residency in Europe,” Hsu says. “I met Benjamin in the lobby