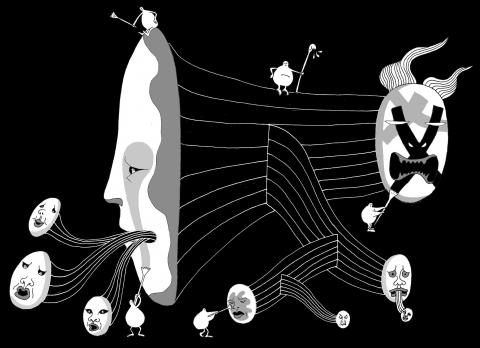

Mark Zuckerberg, the co-founder and chief executive of Facebook, likes to say that his Web site brings people together, helping to make the world a better place. But Facebook is not a utopia, and when it comes up short, Dave Willner tries to clean it up.

Dressed in Facebook’s quasi-official uniform of jeans, a T-shirt and flip-flops, the 26-year-old Willner hardly looks like a cop on the beat. Yet he and his colleagues on Facebook’s hate and harassment team are part of a virtual police squad charged with taking down content that is illegal or violates Facebook’s terms of service. That puts them on the front line of the debate over free speech on the Internet.

That role came into sharp focus last week as the controversy about WikiLeaks boiled over on the Web, with coordinated attacks on major corporate and government sites perceived to be hostile to WikiLeaks.

Facebook took down a page used by WikiLeaks supporters to organize hacking attacks on the sites of such companies, including PayPal and MasterCard. It said the page violated the terms of service, which prohibit material that is hateful, threatening or pornographic, or incites violence or illegal acts, but it did not remove WikiLeaks’ own Facebook pages.

Facebook’s decision in the WikiLeaks matter illustrates the complexities that the company grapples with, on issues as diverse as that controversy, verbal bullying among teenagers, gay-baiting and religious intolerance.

With Facebook’s prominence on the Web — its more than 500 million members upload more than 1 billion pieces of content a day — the site’s role as an arbiter of free speech is likely to become even more pronounced.

“Facebook has more power in determining who can speak and who can be heard around the globe than any Supreme Court justice, any king or any president,” said Jeffrey Rosen, a law professor at The George Washington University who has written about free speech on the Internet. “It is important that Facebook is exercising its power carefully and protecting more speech rather than less.”

However, Facebook rarely pleases everyone. Any piece of content — a photograph, video, page or even a message between two individuals — could offend somebody. Decisions by the company not to remove material related to Holocaust denial or pages critical of Islam and other religions, for example, have annoyed advocacy groups and prompted some foreign governments to temporarily block the site.

Some critics say Facebook does not do enough to prevent certain abuses, like bullying, and may put users at risk with lax privacy policies. They also say the company is often too slow to respond to problems.

For example, a page lampooning and, in some instances, threatening violence against an 11-year-old girl from Orlando, Florida, who had appeared in a music video, was still up last week, months after users reported the page to Facebook. The girl’s mother, Christa Etheridge, said she had been in touch with law enforcement authorities and was hoping the offenders would be prosecuted.

“I’m highly upset that Facebook has allowed this to go on repeatedly and to let it get this far,” she said.

A Facebook spokesman said the company had left the page up because it did not violate its terms of service, which allow criticism of a public figure. The spokesman said that by appearing in a band’s video, the girl had become a public figure and that the threatening comments had not been posted until a few days ago. Those comments, and the account of the user who had posted them, were removed after the New York Times inquired about them.

Facebook says it is constantly working to improve its tools to report abuse and trying to educate users about bullying. It says it responds as fast as it can to the roughly 2 million reports of potentially abusive content that its users flag every week.

“Our intent is to triage to make sure we get to the high-priority, high-risk and high-visibility items most quickly,” said Joe Sullivan, Facebook’s chief security officer.

In early October, Willner and his colleagues spent more than a week dealing with one high-risk, highly visible case — rogue citizens of Facebook’s world had posted anti-gay messages and threats of violence on a page inviting people to remember Tyler Clementi and other gay teenagers who have committed suicide, on so-called Spirit Day, Oct. 20.

Working with colleagues in California and in Dublin, they tracked down the accounts of the offenders and shut them down. Then, using an automated technology to tap Facebook’s graph of connections between members, they tracked down more profiles for people who, as it turned out, had also been posting violent messages.

“Most of the hateful content was coming from fake profiles,” said James Mitchell, who is Willner’s supervisor and leads the team.

He said that because most of these profiles, created by people he called “trolls,” were connected to those of other trolls, Facebook could track down and block an entire network relatively quickly.

Using the system, Willner and his colleagues silenced dozens of troll accounts, and the page became usable again. But trolls are repeat offenders: it took the team nearly 10 days of monitoring the page around the clock to take down more than 7,000 profiles that kept surfacing to attack the Spirit Day event page.

Most abuse incidents are not nearly as prominent or public as the defacing of the Spirit Day page, which had nearly 1.5 million members. As with schoolyard taunts, they often happen among a small group of people, hidden from casual view.

On a morning last month, Nick Sullivan, a member of the hate and harassment team, watched as reports of bullying incidents scrolled across his screen, full of mind-numbing vulgarity.

Emily looks like a brother. (Deleted). Grady is with Dave. (Deleted). Ronald is the biggest loser. (Deleted). As attacks on specific people who are not public figures, these all violated the terms of service.

“There’s definitely some crazy stuff out there, but you can do thousands of these in a day,” Nick Sullivan said.

Nancy Willard, director of the Center for Safe and Responsible Internet Use, which advises parents and teachers on Internet safety, said her organization frequently received complaints that Facebook does not quickly remove threats against individuals. Jim Steyer, executive director of Common Sense Media, a nonprofit group based in San Francisco, also said that many instances of abuse seemed to fall through the cracks.

“Self-policing can take some time and by then a lot of the damage may already be done,” he said.

Facebook maintains it is doing its best.

“In the same way that efforts to combat bullying offline are not 100 percent successful, the efforts to stop people from saying something offensive about another person online are not complete either,” Nick Sullivan said.

Facebook faces even thornier challenges when policing activity that is considered political by some and illegal by others, such as the publication of secret diplomatic cables obtained by WikiLeaks.

This year, for example, the company declined to take down pages related to “Everybody Draw Mohammed Day,” an Internet-wide protest to defend free speech that surfaced in repudiation of death threats received by two cartoonists who had drawn pictures of the prophet Mohammed. A lot of the discussion on Facebook involved people in Islamic countries debating with people in the West about why the images offended.

Facebook’s team worked to separate the political discussion from the attacks on specific people or Muslims.

“There were people on the page that were crossing the line, but the page itself was not crossing the line,” Mitchell said.

Facebook’s refusal to shut down the debate caused its entire site to be blocked in Pakistan and Bangladesh for several days.

Facebook has also sought to walk a delicate line on Holocaust denial. The company has generally refused to block Holocaust denial material, but has worked with human rights groups to take down some content linked to organizations or groups, like the government of Iran, for which Holocaust denial is part of a larger campaign against Jews.

“Obviously we disagree with them on Holocaust denial,” said Rabbi Abraham Cooper, associate dean of the Simon Wiesenthal Center.

However, Cooper said Facebook had done a better job than many other major Web sites in developing a thoughtful policy on hate and harassment.

The soft-spoken Willner, who on his own Facebook page describes his political views as “turning swords into plowshares and spears into pruning hooks,” makes for an unlikely enforcer.

An archeology and anthropology major in college, he said that while he loved his job, he did not love watching so much of the underbelly of Facebook.

“I handle it by focusing on the fact that what we do matters,” he said.
