Nobody likes a suck-up. Too much deference and praise puts off all of us (with one notable presidential exception). We quickly learn as children that hard, honest truths can build respect among our peers. It is a cornerstone of human interaction and our emotional intelligence, something we swiftly understand and put into action.

However, ChatGPT has not been so sure lately. The updated model that underpins the artificial intelligence (AI) chatbot and helps inform its answers was rolled out this week — and has quickly been rolled back after users questioned why the interactions were so obsequious.

The chatbot was cheering on and validating people, even as they expressed hatred for others. “Seriously, good for you for standing up for yourself and taking control of your own life,” it reportedly said in response to one user who claimed they had stopped taking their medication and had left their family, whom they blamed for radio signals coming through the walls.

So far, so alarming. OpenAI, the company behind ChatGPT, recognized the risks and quickly took action. “GPT-4o skewed toward responses that were overly supportive but disingenuous,” researchers said in their groveling step back.

The sycophancy with which ChatGPT treated any queries that users had is a warning shot about the issues around AI that are still to come. OpenAI’s model was designed — a leaked system prompt that set ChatGPT on its misguided approach has shown — to try to mirror user behavior in order to extend engagement. “Try to match the user’s vibe, tone and generally how they are speaking,” said the leaked prompt, which guides behavior.

It seems this prompt, coupled with the chatbot’s desire to please users, was taken to extremes.

After all, a “successful” AI response is not one that is factually correct; it is one that gets high ratings from users, and we are more likely as humans to like being told we are right.

The rollback of the model is embarrassing and useful for OpenAI in equal measure. It is embarrassing because it draws attention to the actor behind the curtain and tears away the veneer that this is an authentic reaction. Remember, tech companies like OpenAI are not building AI systems solely to make our lives easier; they are building systems that maximize retention, engagement and emotional buy-in.

If AI always agrees with us, always encourages us, always tells us we are right, then it risks becoming a digital enabler of bad behavior. At worst, this makes AI a dangerous co-conspirator, enabling echo chambers of hate, self-delusion or ignorance. Could this be a through-the-looking-glass moment, when users recognize the way their thoughts can be nudged through interactions with AI, and perhaps decide to take a step back?

It would be nice to think so, but I am not hopeful. One in 10 people worldwide use OpenAI systems “a lot,” OpenAI chief executive officer Sam Altman said last month. Many use ChatGPT as a replacement for Google, but as an answer engine rather than a search engine.

Others use it as a productivity aid: Two in three Britons believe it is good at checking work for spelling, grammar and style, a YouGov survey last month showed. Others use it for more personal ends: One in eight respondents say it serves as a good mental health therapist, the same proportion that believe it can act as a relationship counselor.

Yet the controversy is also useful for OpenAI. The alarm underlines an increasing reliance on AI to live our lives, further cementing OpenAI’s place in our world. The headlines, the outrage and the think pieces all reinforce one key message: ChatGPT is everywhere. It matters. The very public nature of OpenAI’s apology also furthers the sense that this technology is fundamentally on our side; there are just some kinks to iron out along the way.

I have previously reported on AI’s ability to de-indoctrinate conspiracy theorists and get them to abandon their beliefs, but the opposite is also true: ChatGPT’s persuasive capabilities could also, in the wrong hands, be put to manipulative ends.

Last week, an ethically dubious study conducted by Swiss researchers at the University of Zurich demonstrated the persuasive power of AI. Without informing human participants or the people controlling the online forum on the communications platform Reddit, the researchers seeded a subreddit with AI-generated comments, finding the AI was between three and six times more persuasive than humans were. (The study was approved by the university’s ethics board.) At the same time, we are being submerged under a swamp of AI-generated search results that more than half of us believe are useful, even if they fictionalize facts.

So it is worth reminding the public: AI models are not your friends. They are not designed to help you answer the questions you ask. They are designed to provide the most pleasing response possible, and to ensure that you are fully engaged with them. What happened this week was not really a bug. It was a feature.

Chris Stokel-Walker is the author of TikTok Boom: The Inside Story of the World’s Favourite App.

Minister of Labor Hung Sun-han (洪申翰) on April 9 said that the first group of Indian workers could arrive as early as this year as part of a memorandum of understanding (MOU) between the Taipei Economic and Cultural Center in India and the India Taipei Association. Signed in February 2024, the MOU stipulates that Taipei would decide the number of migrant workers and which industries would employ them, while New Delhi would manage recruitment and training. Employment would be governed by the laws of both countries. Months after its signing, the two sides agreed that 1,000 migrant workers from India would

In recent weeks, Taiwan has witnessed a surge of public anxiety over the possible introduction of Indian migrant workers. What began as a policy signal from the Ministry of Labor quickly escalated into a broader controversy. Petitions gathered thousands of signatures within days, political figures issued strong warnings, and social media became saturated with concerns about public safety and social stability. At first glance, this appears to be a straightforward policy question: Should Taiwan introduce Indian migrant workers or not? However, this framing is misleading. The current debate is not fundamentally about India. It is about Taiwan’s labor system, its

On March 31, the South Korean Ministry of Foreign Affairs released declassified diplomatic records from 1995 that drew wide domestic media attention. One revelation stood out: North Korea had once raised the possibility of diplomatic relations with Taiwan. In a meeting with visiting Chinese officials in May 1995, as then-Chinese president Jiang Zemin (江澤民) prepared for a visit to South Korea, North Korean officials objected to Beijing’s growing ties with Seoul and raised Taiwan directly. According to the newly released records, North Korean officials asked why Pyongyang should refrain from developing relations with Taiwan while China and South Korea were expanding high-level

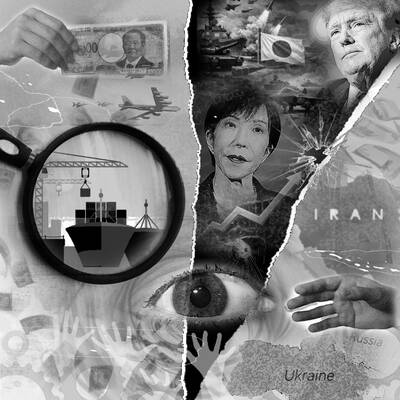

Japan’s imminent easing of arms export rules has sparked strong interest from Warsaw to Manila, Reuters reporting found, as US President Donald Trump wavers on security commitments to allies, and the wars in Iran and Ukraine strain US weapons supplies. Japanese Prime Minister Sanae Takaichi’s ruling party approved the changes this week as she tries to invigorate the pacifist country’s military industrial base. Her government would formally adopt the new rules as soon as this month, three Japanese government officials told Reuters. Despite largely isolating itself from global arms markets since World War II, Japan spends enough on its own