As the International Rescue Committee (IRC) copes with dramatic increases in displaced people in the past few years, the refugee aid organization has sought efficiencies wherever it can — including using artificial intelligence (AI).

Since 2015, the IRC has invested in Signpost — a portfolio of mobile apps and social media channels that answer questions in different languages for people in dangerous situations. The Signpost project, which includes many other organizations, has reached 18 million people so far, but the IRC wants to significantly increase its reach by using AI tools — if it can do so safely.

Conflict, climate emergencies and economic hardship have driven up demand for humanitarian assistance, with more than 117 million people forcibly displaced this year, the Office of the UN High Commissioner for Refugees said.

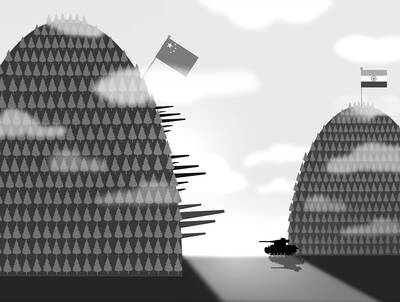

Illustration: Mountain People

The turn to AI technologies is in part driven by the massive gap between needs and resources.

To meet its goal of reaching half of displaced people within three years, the IRC is testing a network of AI chatbots to see whether the network can increase the capacity of the organization's humanitarian officers and the local organizations that directly serve people through Signpost.

For now, the pilot project operates in El Salvador, Kenya, Greece and Italy and responds in 11 languages. It draws on a combination of large language models from some of the biggest technology companies, including OpenAI, Anthropic and Google.

The chatbot response system also uses customer service software from Zendesk and receives other support from Google and Cisco Systems.

If it decides the tools work, the IRC wants to extend the technical infrastructure to other nonprofit humanitarian organizations at no cost. It hopes to create shared technology resources that less technically focused organizations could use without having to negotiate directly with tech companies or manage the risks of deployment.

“We’re trying to really be clear about where the legitimate concerns are, but lean into the optimism of the opportunities and not also allow the populations we serve to be left behind in solutions that have the potential to scale in a way that human to human or other technology can’t,” IRC chief research and innovation officer Jeannie Annan said.

The responses and information that Signpost chatbots deliver are vetted by local organizations to be up to date and sensitive to the precarious circumstances people could be in.

An example query that the IRC shared involves a woman from El Salvador who is traveling through Mexico to the US with her son and is looking for shelter and services for her child. The bot provides a list of providers in the area where she is.

The IRC said it does not facilitate migration.

More complex or sensitive queries are escalated to humans for a response.

The most important potential downside of these tools would be that they do not work. For example, what if the situation on the ground changes and the chatbot does not know? It could provide information that is not just wrong, but dangerous.

A second issue is that the tools can amass a valuable honeypot of data about vulnerable people that hostile actors could target. What if a hacker succeeds in accessing data with personal information or if that data is accidentally shared with an oppressive government?

The IRC said it has agreed with the tech providers that none of their AI models would be trained on the data that the IRC, the local organizations or the people they are serving are generating. It has also worked to anonymize the data, including removing personal information and location.

As part of the Signpost.AI project, the IRC is also testing tools such as a digital automated tutor and maps that can integrate many different types of data to help prepare for and respond to crises.

Cathy Petrozzino, who works for the not-for-profit research and development company MITRE, said AI tools do have high potential, but also high risks.

She said that to use the tools responsibly, organizations should ask themselves: Does the technology work? Is it fair? Are data and privacy protected?

Organizations also need to convene a range of people to help govern and design the initiative — not just technical experts, but people with deep knowledge of the context, legal experts and representatives from the groups that would use the tools, she said.

“There are many good models sitting in the AI graveyard, because they weren’t worked out in conjunction and collaboration with the user community,” she said.

For any system that has potentially life-changing effects, groups should bring in outside experts to independently assess their methodologies, Petrozzino said.

Designers of AI tools need to consider the other systems the tools would interact with, and they need to plan to monitor the model over time, she said.

Consulting with displaced people or others that humanitarian organizations serve might increase the time and effort needed to design these tools, but not having their input raises many safety and ethical problems, CDAC Network executive director Helen McElhinney said.

Such consultation can also unlock local knowledge.

People receiving services from humanitarian organizations should be told if an AI model would analyze any information they hand over, even if the intention is to help the organization respond better, she said.

That requires meaningful and informed consent, she said.

They should also know if an AI model is making life-changing decisions about resource allocation and where accountability for those decisions lies, she said.

Degan Ali, CEO of Adeso, a nonprofit in Somalia and Kenya, has long been an advocate for changing the power dynamics in international development to give more money and control to local organizations.

She asked how the IRC and others pursuing these technologies would overcome access issues, citing the week-long power outages caused by Hurricane Helene in the US.

Chatbots would not help when there is no device, Internet or electricity, she said.

Ali also said that few local organizations have the capacity to attend big humanitarian conferences where the ethics of AI are debated.

Few have staff senior enough and knowledgeable enough to really engage with these discussions, although they understand the potential power and effect these technologies might have, she said.

“We must be extraordinarily careful not to replicate power imbalances and biases through technology,” Ali said. “The most complex questions are always going to require local, contextual and lived experience to answer in a meaningful way.”
